The Checker Maven

The World's Most Widely Read Checkers and Draughts Publication

Bob Newell, Editor-in-Chief

Published every Saturday morning in Honolulu, Hawai`i


Jump? How High?

We've surely all heard variants of the dictate, "When I tell you to jump, you're supposed to ask me, 'How high?'" It's usually implied rather than said outright by, perhaps, a mean boss or someone who abuses their authority.

You'll see the relationship to our game of checkers in the problem below (of course without the abuse factor). The question that arises is more one of "Which way?" rather than "How high?" although the second question does also apply.

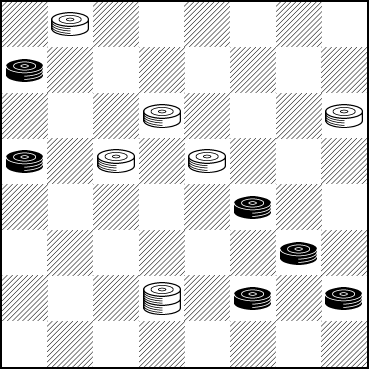

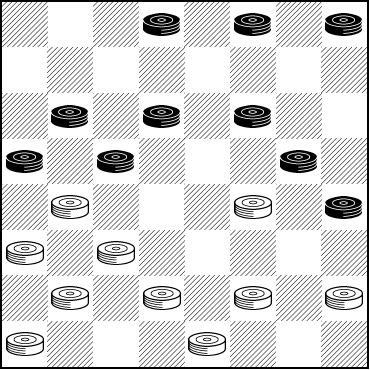

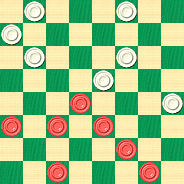

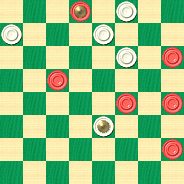

BLACK

Black to Play and Draw

B:WK7,18,19,21,23,32:B5,6,9,14,20,28

Get a jump on this one; the solution isn't terribly difficult to find, but the play is interesting. When you're ready, you won't have to jump your mouse very high to click on Read More to view the solution.

Solution to CV-2: Brian's Bridge

Brian's Bridge

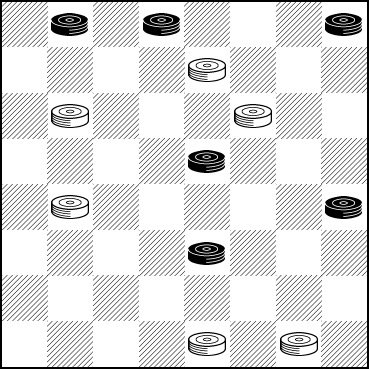

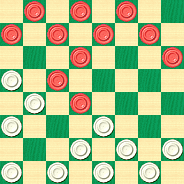

BLACK

WHITE

White to Play and Win

W:W7,9,11,17,31,32:B1,2,4,15,20,23

We hope you enjoyed solving this excellent problem.

Once again, in a problem this complex, we can only give a main line and a few variations. Feel free to explore with your computer. The following solution is the one preferred by the composer, master problemist Brian Hinkle.

1. ... 7-3---A 2. 1-5---B 17-14 3. 15-18 31-27 4. 23-26 11-8 5. 4x11 9-6 6. 2x9 27-24 7. 20x27 32x23 8. 18x27 3-8 9. 9x18 8x24 White Wins.

A---17-13? 2. 15-19 32-28 3. 4-8 11x4 4. 2x11 4-8 5. 11-15 9-5---C 6. 23-27 31x24 7. 20x27 8-11 8. 27-32 11x18 9. 19-23 18x27 10. 32x23 28-24 11. 23-18 24-19 12. 18-14 19-16 13. 14-10 16-11 14. 10-6 11-7 15. 6-2 7-3 16. 2-6 3-7 17. 6-2 7-11 Drawn.

B---15-18 31-27 3. 1-5 17-14 Same.

C---8-11 6. 23-26 11x18 7. 1-5 31x22 8. 5x23 Drawn.

As always, our thanks go out to Brian.

The Almost Shortest Month

We've written before about February being the shortest month of the year, when mortgage, rent, and other monthly payments remain unchanged despite covering fewer days. Well, this February is slightly different, as 2020 is a leap year and February gets an extra day. We suppose that helps at least a little bit.

To start out this "almost shortest month" we have a relatively easy speed problem. We'll skip the JavaScript clock this month and just ask you to solve it in the "almost shortest" time possible.

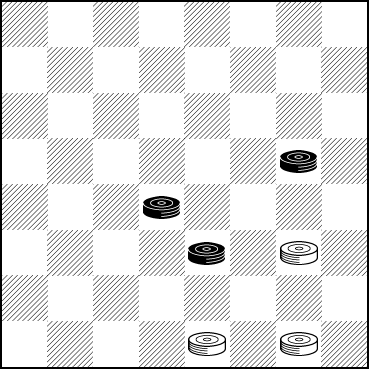

WHITE

White to Play and Win

W:W24,31,32:B16,18,23

When you have the solution, let your mouse take the "almost shortest" path to Read More to verify your answer.

Thanksgiving Weekend

We say it every year: Thanksgiving is our favorite holiday. It's typically American (and, of course, Canadian), it's relevant to every belief and creed, and it unites us all in giving thanks for the many blessings we have. No matter our station in life, we can all find something to be thankful for.

We like to turn to the great American checkerist and problemist Tom Wiswell for holidays such as these, and what better than a problem, arising from an actual game, that Mr. Wiswell called Dixie.

Mr. Wiswell noted, "We met with the well-known O. J. Tanner, who got us into the following predicament."

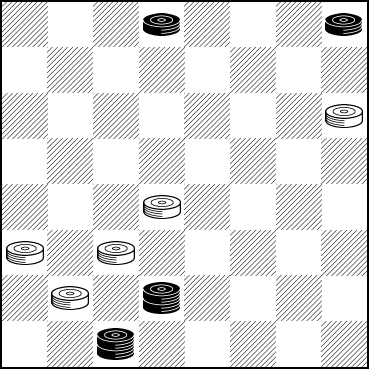

WHITE

White to Play and Draw

W:W12,18,21,22,25:B2,4,K26,K30

White may be a piece up but his options are severely limited by the two Black kings. Can you find your way out of this one? Make yourself a hot turkey sandwich (with mashed potatoes and gravy, of course) as you contemplate this one, and then--- when you've finished the sandwich and come up with a solution--- click on Read More to check your answer.


Machine Learning Comes To Kingsrow

Public Domain

Computers have progressed a very, very long way since their earliest days. It may not be quite as well known, though, that computers have been programmed to play games since almost the very beginning. But we doubt that the hardy coding pioneers of the time would have dreamed just how far the state of the art has come since then.

Certainly well known today are Google's phenomenal Alpha game playing programs, which contain self-teaching or "machine learning" methods. After years and years of computer Go programs barely reaching respectable playing levels, AlphaGo appeared on the scene and defeated one of the world's highest ranked Go players, something no one ever expected. And AlphaZero very quickly became able to defeat even the strongest chess playing programs around. Machine learning is here to stay, and the results are phenomenal.

Of course, it's not new. The idea was proposed by an IBM scientist over 60 years ago. But implementing it successfully was the issue, for to succeed, the computer programs would have to "train" on millions and millions of different game positions. That wasn't a realistic possibility until relatively recent years.

The method worked well at first for non-deterministic games--- games with an element of luck. Gnu Backgammon played at the master level, as did others. But applications to checkers largely failed. Blondie 24 was a lot of fun but never a serious competitor, and NeuroDraughts wasn't fully developed.

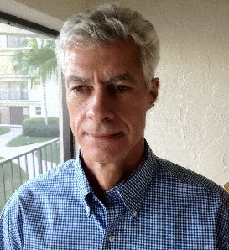

Ed Gilbert

Photo Credit: Carol Gilbert

All that has changed, though, with renowned checker engine programmer Ed Gilbert's latest developments for his world class Kingsrow computer engine. Ed was kind enough to send us the details. The following was written by Ed Gilbert with input from Rein Halbersma.

The latest Kingsrow for 8x8 checkers is a big departure from previous versions.

Until recently, Kingsrow used a manually built and tuned evaluation function. This function computes a numeric score for a game position based on a number of material and positional features. It looks at the number of men and kings of each color, and position attributes including back rank formation, center control, tempo, left-right balance, runaways (men that have an open path to crowning), locks, bridges, tailhooks, king mobility, dog-holes, and several others. Creating this function requires some knowledge of checkers strategy, and is very time consuming.
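To make the idea of a hand-built evaluation concrete, here is a minimal Python sketch of a weighted feature sum. It is only an illustration: the feature names are borrowed from the list above, but the weights, the `handcrafted_eval` function, and the feature counts are hypothetical and are not taken from Kingsrow.

```python
# Hypothetical weights in hundredths of a man; real engines tune many more terms.
WEIGHTS = {
    "men": 100,
    "kings": 130,
    "back_rank": 8,
    "center_control": 5,
    "runaways": 40,
}


def handcrafted_eval(black_features, white_features, weights=WEIGHTS):
    """Score a position from Black's point of view.

    Each argument maps a feature name to a count for that side; the score is
    the weighted sum of the differences (Black minus White).
    """
    return sum(
        weight * (black_features.get(name, 0) - white_features.get(name, 0))
        for name, weight in weights.items()
    )


# Equal men, but Black has one king: the score is the (assumed) king weight.
print(handcrafted_eval({"men": 5, "kings": 1}, {"men": 5}))
```

Every weight in such a table has to be chosen and re-tuned by hand, which is the time-consuming part described above.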

The latest Kingsrow has done away with these manually constructed and tuned evaluation features. Instead it is built using machine learning (ML) techniques which require no game specific knowledge other than the basic rules of the game. It has learned to play at a level significantly stronger than previous versions entirely through self-play games.

In a test match of 16,000 blitz games (11-man ballot, 0.3 seconds per move), it scored a +72 Elo advantage over the best manually built and tuned eval version. There were more than 5 times as many wins for the ML Kingsrow as losses.
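For readers wondering what a 72-point rating gap means in practice, the standard Elo expected-score formula can be sketched in a few lines of Python. The function names are ours, and this is just the textbook formula, not necessarily how the match result was computed.

```python
import math


def expected_score(elo_diff):
    """Expected score (win = 1, draw = 0.5, loss = 0) for the higher-rated side."""
    return 1.0 / (1.0 + 10.0 ** (-elo_diff / 400.0))


def elo_diff_from_score(score):
    """Inverse of expected_score: rating gap implied by an average score in (0, 1)."""
    return -400.0 * math.log10(1.0 / score - 1.0)


print(round(expected_score(72), 3))  # 0.602
```

In other words, a +72 advantage corresponds to scoring roughly 60% of the available points over a long match, with draws counted as half a point.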

The ML eval uses a set of overlapping rectangular board regions. These regions cover either 8 or 12 squares: 8-square regions are used when kings are present, and 12-square regions when the position contains only men. For every configuration of pieces on these squares, a score is assigned by the machine learning process. A position evaluation is then simply the sum of the scores of each region, plus something for any material differences in men and kings. In the 8-square regions, each square can either be empty or occupied by one of the four piece types, so there are a total of 5^8 = 390,625 configurations. In the 12-square regions there are no kings, so there are 3^12 = 531,441 configurations.
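The lookup described here can be sketched as follows. This is a minimal Python illustration under our own assumptions: the piece codes, the `region_index` and `evaluate` helpers, and the tiny two-square regions in the demo are hypothetical, and the actual Kingsrow region layout is not given in the text.

```python
EMPTY, BLACK_MAN, WHITE_MAN, BLACK_KING, WHITE_KING = range(5)


def region_index(contents, base):
    """Read a region's square contents as digits in the given base.

    base=5 covers empty plus four piece types; base=3 covers empty plus men only.
    """
    index = 0
    for code in contents:
        if not 0 <= code < base:
            raise ValueError(f"piece code {code} not valid for base {base}")
        index = index * base + code
    return index


def evaluate(board, regions, tables, base, material_bonus=0.0):
    """Sum the learned score of every region, plus a separate material term."""
    total = material_bonus
    for squares, table in zip(regions, tables):
        total += table[region_index([board[s] for s in squares], base)]
    return total


print(5 ** 8, 3 ** 12)  # 390625 531441

# Toy demo with two overlapping 2-square regions (real regions have 8 or 12 squares).
board = {1: BLACK_MAN, 2: EMPTY, 5: WHITE_MAN}
regions = [(1, 2), (2, 5)]
tables = [[0.0] * 9, [0.0] * 9]
tables[0][region_index([BLACK_MAN, EMPTY], 3)] = 0.25
print(evaluate(board, regions, tables, base=3))  # 0.25
```

Because each configuration maps to exactly one table slot, evaluating a position costs only a handful of index calculations and additions.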

To compute values for each configuration, a large number of training positions are needed. I created a database of approximately one million games through rapid self-play. Each game took about 5 seconds. The positions are extracted from the games, and each position is assigned the win, draw, or loss value of the game result. Initially the values in the rectangular board regions are assigned random values. Through a process called logistic regression, the values are adjusted to minimize the mean squared error when comparing the eval output of each training position to the win, draw, or loss value that was assigned from the game results.
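The training loop just described can be sketched on a toy scale. The Python below is a simplified illustration, not Ed's code: it assumes each training position has already been reduced to the list of table slots it activates, encodes win, draw, and loss as 1, 0.5, and 0, squashes the summed weights through a logistic function, and uses plain stochastic gradient descent on the squared error. The learning rate, epoch count, and sample data are all made up.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def predict(weights, active):
    """Predicted game result in (0, 1) from the weights of the active table slots."""
    return sigmoid(sum(weights[i] for i in active))


def train(samples, n_weights, epochs=200, lr=0.5, seed=0):
    """Fit weights by gradient descent on the squared error against game results."""
    rng = random.Random(seed)
    weights = [rng.uniform(-0.01, 0.01) for _ in range(n_weights)]  # random start
    for _ in range(epochs):
        for active, target in samples:
            p = predict(weights, active)
            # Derivative of (p - target)^2 with respect to each active weight.
            grad = 2.0 * (p - target) * p * (1.0 - p)
            for i in active:
                weights[i] -= lr * grad
    return weights


def mean_squared_error(weights, samples):
    return sum((predict(weights, a) - t) ** 2 for a, t in samples) / len(samples)


# Three toy "positions": (active slots, game result).
samples = [([0, 1], 1.0), ([2, 3], 0.0), ([0, 3], 0.5)]
before = mean_squared_error(train(samples, 4, epochs=0), samples)
after = mean_squared_error(train(samples, 4), samples)
print(before, after)
```

The real training set is vastly larger (positions from about a million games), but the principle is the same: nudge each table entry so the eval's output moves closer to the results of the games in which that configuration appeared.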

Similar machine learning techniques have been used in other board game programs. In 1997, Michael Buro described a similar process that he used to build the evaluation function for his Othello program named Logistello. In 2015, Fabien Letouzey created a strong 10x10 international draughts program named Scan using an ML eval, and around this time Michel Grimminck was using a ML eval in his program Dragon. Since then other 10x10 programs have switched to ML evals, including Kingsrow, and Maximus by Jan-Jaap van Horssen. I think that the English and Italian variants of Kingsrow are the first 8x8 programs to use an ML eval.

Ed's new super-strong version of KingsRow is available for free download from his website. Combine that with his 10-piece endgame database, and you'll have by far the strongest checker engine in the world, a fearsome competitor and an incredible training partner.

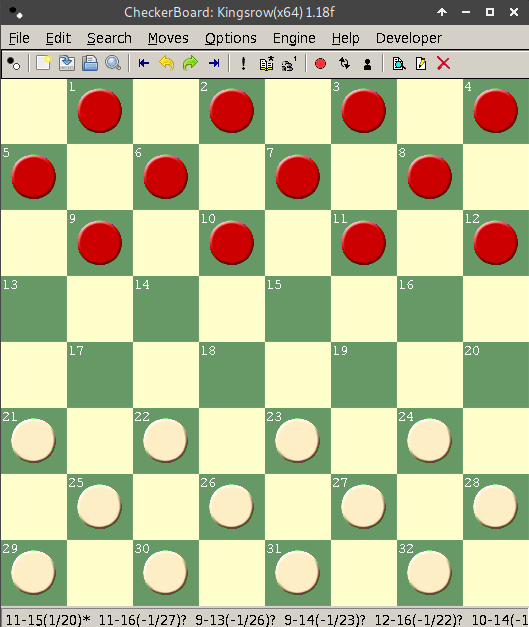

Let's look at a few difficult positions, some of which were analyzed by human players for years and even by reasonably strong computer engines for hours. KingsRow ML solved each and every one of them virtually instantly.

First, the so-called "100 years problem" (as in Boland's Masterpiece, p. 125 diagram 1).

WHITE

White to Play, What Result?

W:WK8,10,21,22,32:B2,13,14,28,K31.

Next, the Phantom Fox Den, from Basic Checkers 2010, p. 260.

WHITE

White to Play, What Result?

W:W16,21,22,23,27,31,32:B1,3,7,10,11,14,20.

And finally, a position suggested by Richard Pask, from Complete Checkers p. 273 halfway down, where Mr. Pask notes: "12-16?! has shock value ..."

WHITE

White to Play, What Result?

W:W17,19,21,22,25,26,27,28,29,31:B2,3,4,9,10,11,13,14,16,20.
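If you'd like to feed these positions to your own program, the position strings used in this column can be parsed with a few lines of Python. This sketch assumes the strings follow the usual FEN-style convention for checkers (side to move, then the White pieces, then the Black pieces, with a K prefix marking a king) and treats a trailing period as punctuation; the `parse_position` name is ours.

```python
def parse_position(fen):
    """Parse a string like 'W:WK8,10:B2,K31.' into (side_to_move, white, black).

    white and black map square numbers (1-32) to 'man' or 'king'.
    """
    fields = fen.strip().rstrip(".").split(":")
    if len(fields) != 3:
        raise ValueError("expected three colon-separated fields")
    side = fields[0].upper()
    if side not in ("B", "W"):
        raise ValueError("side to move must be B or W")
    pieces = {"W": {}, "B": {}}
    for field in fields[1:]:
        colour = field[:1].upper()
        if colour not in pieces:
            raise ValueError("piece list must start with W or B")
        for token in field[1:].split(","):
            token = token.strip()
            if not token:
                continue
            is_king = token.upper().startswith("K")
            square = int(token[1:] if is_king else token)
            if not 1 <= square <= 32:
                raise ValueError(f"square {square} is off the board")
            pieces[colour][square] = "king" if is_king else "man"
    return side, pieces["W"], pieces["B"]


side, white, black = parse_position("W:WK8,10,21,22,32:B2,13,14,28,K31.")
print(side, white, black)
```

The same function handles the lists written in descending order elsewhere on this page, since the order of the squares doesn't matter.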

Surely we don't expect you to solve each of these (unless you wish to), but do look them over and at least form an opinion. Then click on Read More and be amazed.

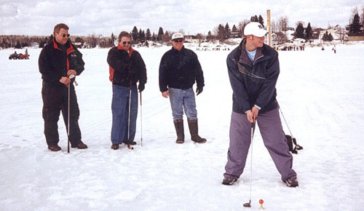

A Winter Stroke

Many golf enthusiasts just can't get enough, and while we don't know how they do it, some of them golf even during the winter when snow covers the ground. They're out taking their "winter strokes" and loving every minute of it.

We have a different "winter stroke" to offer you. We don't know if snow is as yet on the ground where you live--- it might well be in some locations--- but we're pretty sure you've kept the snow off your checkerboard. Here's a stroke problem that will stretch your powers of visualization.

WHITE

White to Play and Win

W:W15,17,18,19,22,26,27,28,30,32:B1,3,6,8,9,10,11,12,13,20.

Solving these problems is par for the course; when you're done, click on Read More to score your solution.

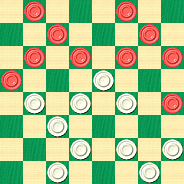

Checker Apps for Android

A few weeks back, we presented Ed Gilbert's review of checker apps for the iPhone. We promised a similar review of Android apps, and we're bringing you that review today.

The iPhone scene was frankly pretty bleak. Ed didn't find a single truly serious checker app for the popular Apple smartphone. We'll cut to the chase: for Android phones, the situation is somewhat better. There are two reasonable candidates for the "serious app" title and a whole host of "toy" apps.

As was the case with the iPhone, there is just too much detail for a single weekly column, so instead you'll find the full details on this separate web page.

The two apps that we think merit consideration are Checkers Tutor, by world class checker programmer Martin Fierz (author of CheckerBoard and the Cake computer engine), and Checkers for Android by programmer Aart Bik, who is best known for Chess for Android. Aart's checker app is free and Martin's app sells for just one dollar.

Martin Fierz and Aart Bik

Interesting and detailed email interviews with both Martin and Aart can be found on the web page linked above.

So, which app should you install? Checkers Tutor plays a reasonable game, certainly well above the casual skill level. The same can be said of Checkers for Android. It's also safe to say that neither of these will provide a serious challenge to a master-level player.

Our usual benchmark is Martin Fierz's Simple Checkers engine. Both Checkers Tutor and Checkers for Android play somewhat better than Simple, at least based on the limited trial matchups conducted in our offices. But you won't get play at the level of Cake or KingsRow, either.

There's some irony here. Both Martin and Aart received a lot of user feedback saying that the engines are too strong and that the user can never win a game! This perhaps says more about the casual player than it does about the strength of the computer engines.

How do Checkers Tutor (CT) and Checkers for Android (CFA) compare with each other?

CT has hands-down the better graphics and display, and some additional play features, such as move take-back and replay, neither of which is present in CFA. Both programs lack a move list or a means of exporting moves. CT has optional square numbers; CFA allows compulsory jumping to be turned off, which you may consider a feature or an anti-feature, but Aart says users demanded it. CT allows for selection of a random 3-move ballot. CFA has a tiny endgame database which most notably will allow it to convert a two kings vs. one win, something a surprising number of programs can't do.

Neither app allows for position set-up, but neither engine is really strong enough to do meaningful analysis.

Which engine plays better checkers? That's a good question. We think CT has a definite edge, albeit not a large one. In the two head-to-head games that we conducted, CT handily won one of them. CFA got a winning position in the other game but then couldn't figure out the ending and the result was a draw. Such limited testing is hardly decisive, of course.

Our bottom line is that there's little reason not to install both apps and make your own comparison. It will only cost you a dollar, after all, and you'll have a lot of fun ahead of you.

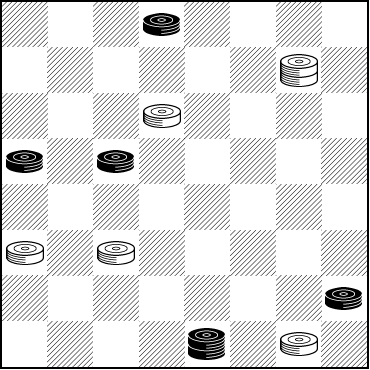

Several of the test games are on the companion page mentioned earlier. What we'll show you here is an excerpt from one of the games between CT and CFA. We'll stop at one of the game's critical points and let you take over.

The game was played on April 18, 2012 at the Hawai`i State Library. Checkers Tutor had Black and played at 15 seconds per move. Checkers for Android had White and played at 10 seconds per move (it wasn't possible to set the same timing for both).

| 1. | 11-15 | 23-19 |

| 2. | 8-11 | 26-23 |

An inferior move; 22-17 or 22-28 are better.

| 3. | 9-14 |

4-8 holds the edge.

| 3. | ... | 22-18 |

A checker subtlety: 22-17 equalizes, while this does not.

| 4. | 15x22 | 25x9 |

| 5. | 5x14 | 29-25 |

| 6. | 11-15 |

4-8 would have maintained the lead.

| 6. | ... | 25-22 |

| 7. | 14-18 | 23x14 |

| 8. | 10x26 | 19x10 |

| 9. | 6x15 | 31x22 |

| 10. | 4-8 | 27-23 |

| 11. | 2-6 | 21-17 |

| 12. | 7-10 | 23-18 |

| 13. | 8-11 | 17-13 |

This move loses; 30-26 is correct. CT now has a win on the board but won't find it. Will you?

BLACK (CT)

Black to Play and Win

B:W32,30,28,24,22,18,13:B15,12,11,10,6,3,1.

Who's better, CT, CFA, or you? Match wits with the computer, then click on Read More to see the solution.

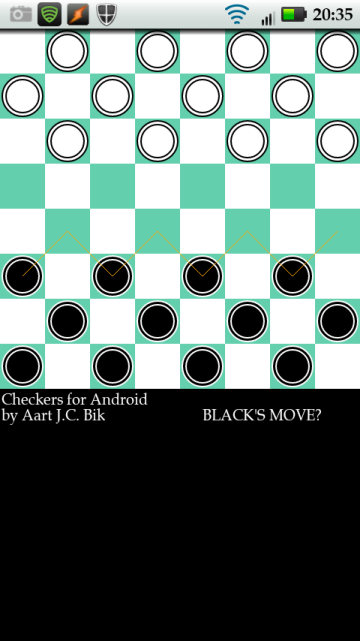

Checkers: Apps for the iPhone

The telephone above is most definitely not an iPhone, but it does seem to pretty well represent the state of the art when it comes to playing checkers on an iPhone.

This article is the first of two on smartphone checker apps. Ed Gilbert, author of the world-class KingsRow checker engine and companion 10-piece endgame database, has graciously given of his time in order to evaluate a group of checker apps for the iPhone. A future article will look into checker apps for Android-based phones.

Ed Gilbert

Photo Credit: Carol Gilbert

Ed's article is extensive and includes large graphics, so it merits its own web page. You can find it here, but we'll give you Ed's bottom line right away: there's not much out there that has merit for the serious player. That's indeed regrettable, because from what Ed shows us, we can't help but conclude that the iPhone app authors could have done much better without a lot of additional effort. Unfortunately, most checker program authors think they are producing toys rather than serious game-playing programs, and that's just what they end up doing.

Let's look at a sample game that Ed ran between the iPhone checker apps "Teeny Checkers" and "Fantastic Checkers".

| Black | Fantastic Checkers |

| White | Teeny Checkers |

| 1. | 11-15 | 22-17 |

| 2. | 9-14 | 17-13 |

25-22 was better.

| 3. | 8-11 | .... |

15-19 would retain the advantage.

| 3. | .... | 25-22 |

| 4. | 4-8 | .... |

Very weak. 11-16 is best.

| 4. | .... | 22-17 |

23-19 would have given a large advantage if not a win.

| 5. | 15-19 | 24x15 |

| 6. | 11x18 | .... |

10x19 was much better and is in fact a book move.

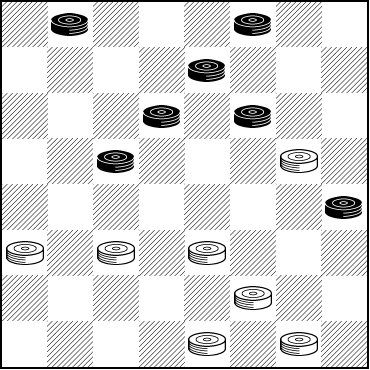

White now has a simple move that very likely leads to a win. Can you spot it?

WHITE

White to Play and Win

W:W32,31,30,29,28,27,26,23,21,17,13:B18,14,12,10,8,7,6,5,3,2,1.

We'll give you the answer, and you'll find out how the game progressed, when you dial your mouse to Read More.

Taking A Break

Public Domain Pictures CC0

Some of the problems and study material presented in our weekly columns are, to say the least, somewhat challenging for the average player. But we've always tried to make a point of having something for everyone, so, once again, we're "taking a break" from the really hard stuff and presenting a problem that is interesting, practical, and not so difficult.

BLACK

Black to Play and Win

B:W30,28,26,22,K10:BK31,21,19,14,13,5.

Black is a man up, but not for long. Still, there is a very nice win on the board. Can you see it? We'd rate this problem as no higher than "intermediate" in difficulty, and we're sure the more experienced players will call it "easy." Whatever it may be, can you solve it? The truly easy thing about it: clicking on Read More will lead you right to the solution.

Double Cross in the Double Cross

A few years ago, Ed Gilbert, the author of the KingsRow computer checkers engine and the creator of the companion 10-piece endgame database, sent some new play to one of checkers' sharpest-eyed analysts, Brian Hinkle. Ed told Brian the following:

"The 5-9 24-20 Double Cross is indeed a draw. This is exciting news to me, since this is a new, unknown draw in a ballot that is generally considered by a lot of players to be the most difficult of the 3-move tournament ballots. This morning I loaded the new opening book into Kingsrow and played along the PV. It dropped out of book at the 40th ply into a very interesting position where White had sacrificed a man to gain a first king with a positional advantage."

Ed showed the following line of play:

| 1. | 9-14 | 23-18 |

| 2. | 14x23 | 27x18 |

| 3. | 5-9 | 24-20 |

| 4. | 10-15 | 28-24 |

| 5. | 7-10 | 21-17 |

| 6. | 3-7 | 17-13 |

| 7. | 9-14 | 18x9 |

| 8. | 15-18 | 22x15 |

| 9. | 10x28 | 9-5 |

| 10. | 11-16 | 20x11 |

| 11. | 8x15 | 31-27---A |

| 12. | 4-8 | 25-22 |

| 13. | 8-11 | 29-25 |

| 14. | 11-16 | 25-21 |

| 15. | 16-20 | 21-17 |

| 16. | 7-11 | 27-23 |

| 17. | 12-16 | 13-9 |

| 18. | 6x13 | 17-14 |

| 19. | 2-6 | 14-9 |

| 20. | 6-10 | 9-6---B |

A---25-22 4-8 31-27 same.

B---This is the end of the computer's opening book moves. Note that Ed constructed a special opening book that examined the Double Cross in great depth and detail.

Ed comments further, "Every black move from 24-20 up to move 13 is forced."

Here is the position at the end of the KingsRow specialized Double Cross opening book.

BLACK

Black to Play and Draw

B:W32,30,26,23,22,6,5:B28,20,16,15,13,11,10,1.

Finding the rest of the solution is not an easy task, but you owe it to yourself to give it a try. The solution is not long, but it is very surprising, perhaps ranking among the most surprising things we've ever seen on the checkerboard. After you've done your analysis, click on Read More to see the truly stunning conclusion.

The Checker Maven is produced at editorial offices in Honolulu, Hawai`i, as a completely non-commercial public service from which no income is obtained or sought. Original material is Copyright © 2004-2026 Avi Gobbler Publishing. Other material is public domain, AI generated, as attributed, or licensed under CC1, CC2, CC3 or CC4. Information presented on this site is offered as-is, at no cost, and bears no express or implied warranty as to accuracy or usability. You agree that you use such information entirely at your own risk. No liabilities of any kind under any legal theory whatsoever are accepted. The Checker Maven is dedicated to the memory of Mr. Bob Newell, Sr.